Think of the rulebook on any cam platform as its terms of service – the official list of dos and don'ts. But let's be real: these rules aren't some lofty moral code. They're shaped almost entirely by business priorities. We're talking about keeping payment giants like Visa and Mastercard happy, looking "brand-safe" for any potential advertisers, and a constant, frantic effort to dodge public scandals.

The Hidden Rules of the Game: Understanding Platform Moderation

Let's not sugarcoat it. Most platform rulebooks are a nightmare. They're dense walls of corporate jargon and vague warnings, leaving frustrating grey areas that trip up even veteran models. But if you want to build a career that lasts and avoid the dreaded ban hammer, you have to learn to read between the lines.

The first penny that needs to drop is this: moderation has very little to do with morality. It’s all about business. These platforms are companies performing a constant, delicate balancing act. They need to give creators enough freedom to be entertaining and pull in a paying crowd, but they also have to satisfy the rigid, non-negotiable demands of their financial partners.

Why The Rules Really Exist

Instead of a moral compass, think of a platform's rules as a risk-management spreadsheet. The folks with the real power aren't the site owners; they're the payment processors and banks handling every single transaction. These financial giants have their own strict codes of conduct and will drop a cam platform like a hot potato if they spot content that could tarnish their squeaky-clean brand image.

This dynamic creates a permanent tug-of-war between two opposing forces:

- Creative Freedom: Your ability to express yourself, find your niche, and build a genuine connection with your audience.

- Platform Control: The platform's desperate need to keep its service "clean" enough to keep the credit card companies happy and stay ahead of changing laws.

At its heart, the central conflict for any creator is this: you are an independent entrepreneur building a business inside a corporate ecosystem you don't control. Your brand is always subject to their brand's risks.

It All Comes Down to the Business Model

Every single rule, from what you can wear (or not wear) to the words you can type in chat, is a direct result of this tension. It's not a judgment on your performance; it's a reflection of the business model you've opted into. The platforms that give you access to a global audience also have to play by mainstream corporate rules. For a closer look at these structures, check out our guide on how different adult streaming platforms work.

Getting a handle on these "unwritten" rules—by understanding the why behind the official Terms of Service—is the key to longevity. It means you can create with confidence, knowing you're navigating not just the explicit list of demands, but the implicit boundaries that really govern the space. It’s about playing the game strategically to keep your account safe and your income flowing.

The Content Red Lines That Get You Banned or Flagged

Of course, anything illegal will get you booted instantly, and rightly so. But beyond that, there’s a whole universe of grey areas and platform-specific tripwires that catch even seasoned creators out. These are the subtle violations of the moderation rules on cam platforms that lead to confusing warnings, suspensions, or a career-ending permanent ban.

It’s crucial to understand that many of these rules aren't about protecting you or the viewer. They exist to protect the platform's ability to process payments. If something looks remotely risky to Visa or Mastercard, it’s going to be scrutinised heavily.

Financial Foul Plays

It’s not just about what you do on camera; how money moves is a massive focus for moderation teams. They’re constantly hunting for anything that smells like fraud or might lead to a customer demanding a chargeback.

Common financial tripwires include:

- Tip Farming: Encouraging users to send lots of small, rapid tips for reasons other than your performance. To a payment processor's algorithm, this can look like automated bot activity, a huge red flag.

- Off-Platform Solicitation: Pushing your audience to pay you through external channels like PayPal or Cash App is a cardinal sin. The platform needs its cut, and diverting traffic away from their system is a fast track to a ban.

- Misleading Tip Goals: Setting a tip goal for a specific act and then not following through is a surefire way to get a flood of user complaints. Platforms see this as a potential scam that creates a customer service nightmare they have to clean up.

Content and Conduct That Crosses The Line

This is where things get subjective and, frankly, frustrating. What one platform considers "harmful" can feel incredibly vague. Worse, these rules can change overnight, usually after bad press or a quiet policy shift from a payment provider.

The core principle is simple: if it creates a legal or financial headache for the platform, it’s on the chopping block. Your creative freedom will always play second fiddle to their risk management.

Think of it this way: certain kinks, while perfectly legal between consenting adults, are labelled "high-risk" by financial institutions. This often includes themes that could be misinterpreted or appear non-consensual, even if they are completely staged and consensual.

The Grey Areas That Get Accounts Flagged

Let's break down some real-world examples that frequently land creators in hot water:

- "Age-Play" Scenarios: Any performance that could possibly be perceived as involving minors is an absolute no-go, even if everyone involved is a verified adult. Both automated systems and human moderators have zero tolerance for this. There are no second chances.

- Extreme or "Risky" Fetishes: Content involving simulated violence (even when consensual), certain bodily fluids, or specific types of humiliation can fall foul of the "harmful content" clause. Again, this isn't about legality; it's about what a credit card company is willing to be associated with.

- Banned Keywords: Every platform has an internal, often secret, list of banned words related to illegal acts, extreme kinks, and off-platform payment methods. Using one in your stream title, bio, or chat can trigger an automatic flag, no matter your intent.

- Featuring Unverified People: Bringing anyone onto your stream who hasn't completed the platform's strict age and identity verification is a massive violation. For the platform, this is a huge legal liability, and they enforce this rule without exception.

To stay safe, you have to learn to think like a platform's compliance officer. Before you do something, ask: "Could this be misinterpreted in the worst possible way by a bank or a journalist?" If the answer is even a maybe, you're probably stepping on a landmine.

How Moderation Works Behind The Scenes

Ever get that feeling you’re being watched? On a cam platform, you are. But it's not some shadowy figure in a dark room; it's a complex, often clunky, hybrid of tireless AI bots and overworked human moderators. Getting your head around this system is crucial to understanding why a stream that was fine yesterday might suddenly land you a warning today.

The first line of defence is always automated. Think of it as a digital bouncer that never sleeps, constantly scanning every broadcast in real-time. This AI isn't watching for entertainment—it's programmed to hunt for specific rule violations.

It's on the lookout for visual cues, like certain props or gestures. It "listens" for banned keywords in the chat and even analyses tipping patterns for fraud. The second it thinks it's found a breach of the platform's rules, it flags the account for review.

The AI Gatekeepers

These automated systems are incredibly fast, but they aren't clever. They operate on a rigid set of instructions and have no grasp of context, nuance, or irony. This is why so many creators get hit with baffling, out-of-the-blue flags.

Common triggers for an AI flag include:

- Keyword Violations: You might use a word from the platform’s secret banned list, even innocently.

- Visual Mismatches: An object in your background could be misidentified by the AI as something prohibited.

- Payment Anomalies: A sudden flurry of small, rapid tips can look like fraudulent bot activity.

Once the AI raises a flag—or a user files a report—the case gets passed to a human review team.

The Human Review Teams

Don't picture a friendly office of employees who understand your work. The reality is that moderation teams are often outsourced, sometimes to different countries. They work from a strict, unforgiving playbook and are trained to make lightning-fast decisions based only on the evidence in front of them.

Their job isn't to interpret your artistic intent; it's to decide if you broke a specific rule. These moderators are under immense pressure to clear a high volume of cases, which means grey areas are often decided in favour of the platform just to minimise risk.

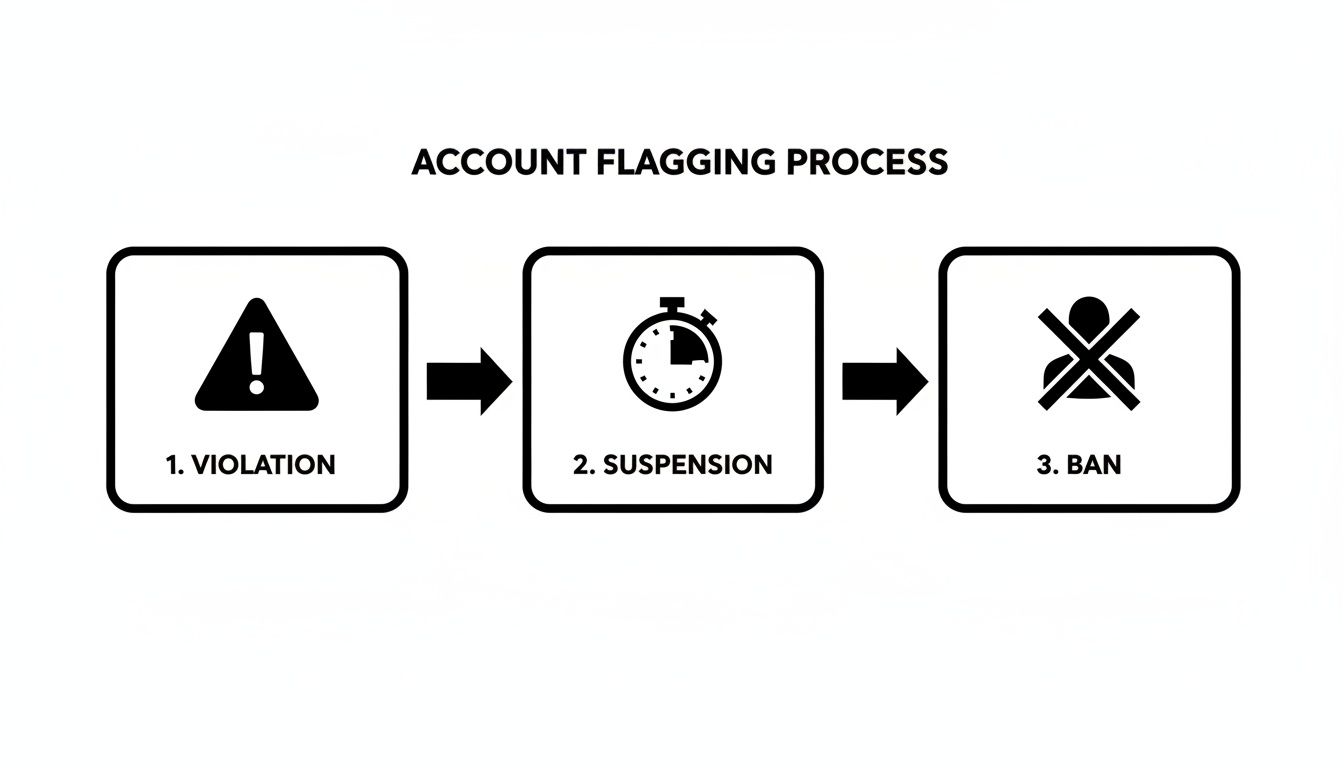

The diagram below shows the typical escalation path once a violation is confirmed.

As you can see, a single violation can quickly escalate from a simple warning to a permanent ban, often with very little room for discussion.

This reliance on automation has only intensified. In the wake of the UK's Online Safety Act, platforms scrambled to avoid hefty fines. Ofcom reported that 78% of major streaming platforms in the UK implemented AI-driven proactive moderation by early 2025, up from just 32% in 2023. As a result, a 2024 survey found that 62% of UK-based streamers had experienced account suspensions from automated flags on what platforms deemed 'high-risk' interactions.

The blunt reality is this: you are being judged by a combination of an unthinking algorithm and a human moderator who has likely never seen your stream before, has about 90 seconds to decide your fate, and is following a corporate script.

The system is inherently flawed, leading to inconsistent rulings and frustrating false positives. But by understanding how it works, you’re better equipped to avoid its tripwires. If you're curious about the broader ecosystem, you can learn more from our guide on how webcam sites work. This knowledge is your best defence.

How The UK Online Safety Act Is Changing The Game For Creators

If you’re streaming from the UK, the Online Safety Act (OSA) isn’t just legal jargon; it has rewritten the rulebook. Think of it as a new, much stricter set of house rules for every platform operating here. The era of vague terms and sluggish enforcement is over, replaced by a high-stakes environment where platforms are directly accountable for what happens on their sites.

For creators, the most immediate impact has been the intense focus on age verification. Platforms are under massive pressure from the regulator, Ofcom, to prove they are doing everything possible to keep minors away from adult content. This is why you've likely seen verification processes become far more demanding. To get a better sense of the tech involved, check our deep dive into how age verification apps work.

The New Reality of 'Harmful Content' and Platform Liability

The real shift is platform liability. Sites are no longer passive hosts; they are legally responsible for shielding users from illegal material and, critically, for protecting children from content considered "harmful" to them. This "harmful" category is deliberately broad, covering things that are perfectly legal for adults to create and watch but might be deemed damaging if seen by a minor.

This has had a noticeable chilling effect. Platforms, fearing eye-watering fines, are now moderating with a much heavier hand. A niche or performance that was fine last year might now get flagged as too risky. The platform isn't just asking if your content is legal anymore; it's assessing whether it could create a PR disaster for them under the OSA.

The crucial takeaway for UK creators: a platform's tolerance for risk has hit rock bottom. They would rather mistakenly ban a hundred rule-abiding creators than miss a single one who could land them in hot water with Ofcom. Your account's safety is secondary to their corporate compliance.

Why Platforms Are Quicker To Ban

This new regulatory pressure has sparked a huge investment in moderation. Driven largely by the OSA, the UK's content moderation market is projected to reach £1.2 billion by 2026. High-risk services like cam platforms now dedicate an average of 22% of their operational budget to moderation—a staggering 62% increase since 2023. This translates directly into more aggressive AI filters and larger human review teams. You can see more details on the growing content moderation market over at Mordor Intelligence.

This is why the ban hammer seems to be falling faster and harder. Automated systems are calibrated to be overly cautious. Human reviewers work from strict, unforgiving guidelines. The aim is no longer just managing a community; it’s demonstrating absolute compliance to regulators. For you, this means far less room for error and fewer second chances.

Online Safety Act Impact On UK Cam Platform Rules

| Area of Change | Previous Standard (Pre-OSA) | Current Standard (Post-OSA) | What This Means For Creators |
|---|---|---|---|
| Age Verification | Basic ID checks, often with lenient reviews. | Stringent, multi-layered verification using biometrics and AI. Mandatory for all UK users. | Expect tougher, more frequent identity checks. Any doubt leads to suspension. |
| Content Moderation | Primarily reactive, based on user reports. | Proactive and aggressive, using AI and human teams to pre-screen for "harmful content". | Your content is scrutinised more than ever. What was acceptable yesterday may be banned today. |
| Platform Liability | Platforms were 'hosts' with limited legal responsibility. | Platforms are legally liable for user safety and face massive fines from Ofcom. | Platforms are extremely risk-averse. They will err on the side of banning to protect themselves. |
| Appeals Process | Often slow, inconsistent, and with no clear process. | Platforms must provide clear, robust, and accessible appeals processes. | You have a stronger right to appeal, but the initial decision is likely to be harsher. |

Ultimately, the OSA places the burden of proof squarely on the platforms, and by extension, on you. Navigating this new environment requires more diligence than ever before.

How to Appeal a Ban or Suspension and Actually Win

That notification lands like a punch to the stomach: "Your account has been suspended." It’s every creator’s worst nightmare. Your first instinct might be to panic and hammer out a furious email. Honestly, that’s the single worst thing you can do.

Winning an appeal isn't about letting your emotions fly; it’s a strategic game. There are no guarantees, but approaching it with a cool head and a solid plan dramatically improves your chances. Think of it less like a shouting match and more like building a legal case where you are your own best advocate.

First step? Stop. Don't touch that appeal button. Take a breath and fight the urge to react on impulse. An appeal riddled with rage or vague claims like "I didn't do anything wrong!" is going straight to the bin.

Step 1: Gather Your Evidence Like a Detective

Before you write a word, assemble your case file. Your memory isn't enough—you need proof. Your mission is to find anything that backs up your side of the story or shows that the moderation, whether person or algorithm, got it wrong.

Your evidence folder checklist:

- The Notification: Screenshot the suspension notice immediately. Look closely at the specific rule they claim you violated.

- Chat Logs: If the problem started with a user interaction, grab screenshots of the conversation. This is your golden ticket for proving harassment or showing context an AI might have missed.

- Stream Recordings: If you record your streams locally (always a good idea), you have the ultimate alibi.

- Profile Screenshots: Snap pictures of your bio and stream titles to prove you weren't using banned keywords.

Step 2: Craft Your Appeal with Precision

Now it’s time to write. Your appeal needs to be professional, concise, and stripped of emotion. Remember, you're writing to an overworked moderator who has a minute or two, at most, to decide your fate.

The most effective appeal is a boring one. It should read like a business email, not a desperate plea. Stick to the facts, reference the rules, and make it incredibly easy for the moderator to agree with you and move on.

Follow this structure:

- State Your Identity: Start with your username and the suspension date.

- Acknowledge the Cited Rule: Clearly state the rule they cited. "My account was suspended for an alleged violation of section 4.b of the Terms of Service regarding off-platform communication." This shows you've actually read their notice.

- Present Your Counter-Argument Calmly: Refute their claim using your evidence. "The suspension notice mentions a user report. As you can see from the attached chat log (screenshot_A.jpg), the user was demanding I break the rules, and I clearly refused."

- Reference Their Own Rules: Quote the platform’s terms of service back at them. Show how your actions were actually in line with the very rules they're meant to enforce.

- Close Professionally: End with a simple request. "I believe this was a misunderstanding and respectfully request my account be reinstated. Thank you for your time."

Step 3: Know What Gets an Appeal Instantly Rejected

Avoid these common mistakes that guarantee your appeal goes straight in the bin:

- Emotional Rants: Any sign of anger, threats, or insults will get your ticket closed.

- Blaming Users: Even if a user was at fault, keep the focus on how you followed the rules, not on what they did wrong.

- Vague Excuses: "I didn't know that was a rule" is never a valid defence.

- Lying: If you genuinely broke a rule, don't deny it. A sincere apology can sometimes work wonders, but dishonesty never does.

At the end of the day, you're dealing with a flawed system. Sometimes, even the perfect appeal won't succeed. But by being methodical and professional, you give yourself the best possible shot at getting back online.

Your Creator's Moderation Survival Checklist

All this talk of rules, bots, and bans can feel a bit much. So, let's break it down into a simple, actionable game plan. Think of this as your pre-stream ritual—a routine that will keep your channel safe, your income steady, and your stress levels low.

The key to a long career is treating moderation as a professional skill, not just a set of annoying restrictions. It’s about taking charge of your workspace and managing risk before it becomes a problem. This isn't paranoia; it's professionalism.

Your Monthly Moderation Ritual

Set a calendar reminder for a quick check-in once a month. It’s like checking the smoke alarms; a few minutes on prevention can save you from a major headache.

- Skim the Terms of Service (TOS): No one expects you to read the whole legal document. Just look for a "Last Updated" date. If it’s new, use your browser's search function (Ctrl+F, or Cmd+F on a Mac) to look for keywords like "payment," "content," or "conduct" to spot the changes that matter.

- Review Your Content Tags: Are your tags still accurate? Tagging your stream incorrectly is one of the easiest ways to get flagged by mistake. Make sure they genuinely reflect what you're doing.

- Test Your Chat Bots and Filters: Are your automated tools still working? Noticed new spammy phrases in chat? Add them to your filter list to keep your room clean.

In-Stream Best Practices

These are the habits that protect you while you're live, helping you handle the unpredictable nature of streaming and the trickier details of platform moderation.

Your stream is your business, and the chat is your storefront. You wouldn't let a troublemaker cause chaos in a physical shop, so don't tolerate it in your digital one. Set the tone from the start and own your space.

- Establish a Clear Off-Platform Policy: Figure out exactly how you want to interact with fans away from the site. Make your rules clear, stick to them, and never discuss payments or explicit content in public chat. This avoids grey areas that could be misinterpreted.

- Keep Meticulous Records: If a user gets weird or aggressive, screenshot it immediately. Save chat logs from anyone who makes you uncomfortable or pushes your boundaries. This evidence is gold if they try to file a bogus report against you.

- Know the Appeals Process in Advance: The worst time to figure out the appeals process is right after you've been suspended. Find the support section now and bookmark the link. Knowing the steps before you need them lets you act quickly and clearly if something goes wrong.

Frequently Asked Questions About Cam Moderation

We've walked through the systems, the rules, and how to navigate them. Now, let’s get straight to the point and tackle some of the most common questions that come up time and time again about moderation rules on cam platforms.

Can I swear or use explicit language on stream?

In most cases, yes—but context is everything. Platforms for adult audiences generally get that adult language is part of the territory. The line is usually drawn at hate speech, slurs, or direct harassment.

Think of it like being in a pub. A bit of colourful language is fine, but screaming targeted insults will get you kicked out. Remember that your stream titles and bios are often under a stricter microscope, as they're public-facing and easily scanned by filters.

What happens if a viewer breaks the rules in my chat?

You’re the captain of your own ship. Platforms expect you to moderate your own channel. If a viewer starts spamming, being abusive, or soliciting, use your tools—mute, kick, or ban them right away.

A proactive approach is your best defence. If you let rule-breaking run wild in your chat, the platform might see it as you endorsing that behaviour, putting your own account on the line. Don't be shy with that block button; it’s there for a reason.

Can platforms moderate my private messages?

Yes, absolutely. It's a huge misconception that private messages (PMs) are a lawless free-for-all. They're not. If a user reports a conversation for rule-breaking, like harassment or taking business off-site, the moderation team has the right to review those message logs.

Never think of your PMs as a secret backchannel for breaking public rules. The same code of conduct applies everywhere.

Is it a violation to stream when I've been drinking?

This is a real grey area and differs massively from site to site. Some platforms have a zero-tolerance policy against appearing intoxicated, viewing it as a potential consent issue. Others are more relaxed, as long as your behaviour doesn't cross other lines.

Your safest bet is to dig into your specific platform’s TOS on this one. When in doubt, play it safe. An automated system can’t tell the difference between tipsy and seriously impaired, and you don’t want to risk a suspension over it. It’s always better to stay professional and in control.